Scaling Parallel Graph Computing for Real-Time Robots

Understanding strong and weak scaling limits for real-time robotic planning

Why This Matters

Real-time robots increasingly rely on graph-based planning algorithms for collision avoidance, trajectory generation, and decision making. While parallelization can dramatically reduce computation time, it can also introduce performance variability — a serious risk for safety-critical systems.

This project investigates whether large-scale parallel graph search can deliver not just speed, but predictability, which is essential for real-time robotics.

Technical Challenges

- Determining the optimal core count for real-time responsiveness

- Understanding strong vs weak scaling behavior under robotic workloads

- Quantifying the impact of CPU contention in multi-robot scenarios

- Separating algorithmic limits from hardware-induced slowdowns

Approach

I implemented a hash-distributed, multi-goal graph search algorithm using MPI, representative of robotics planning workloads. The system was evaluated under both strong and weak scaling conditions on HPC hardware.

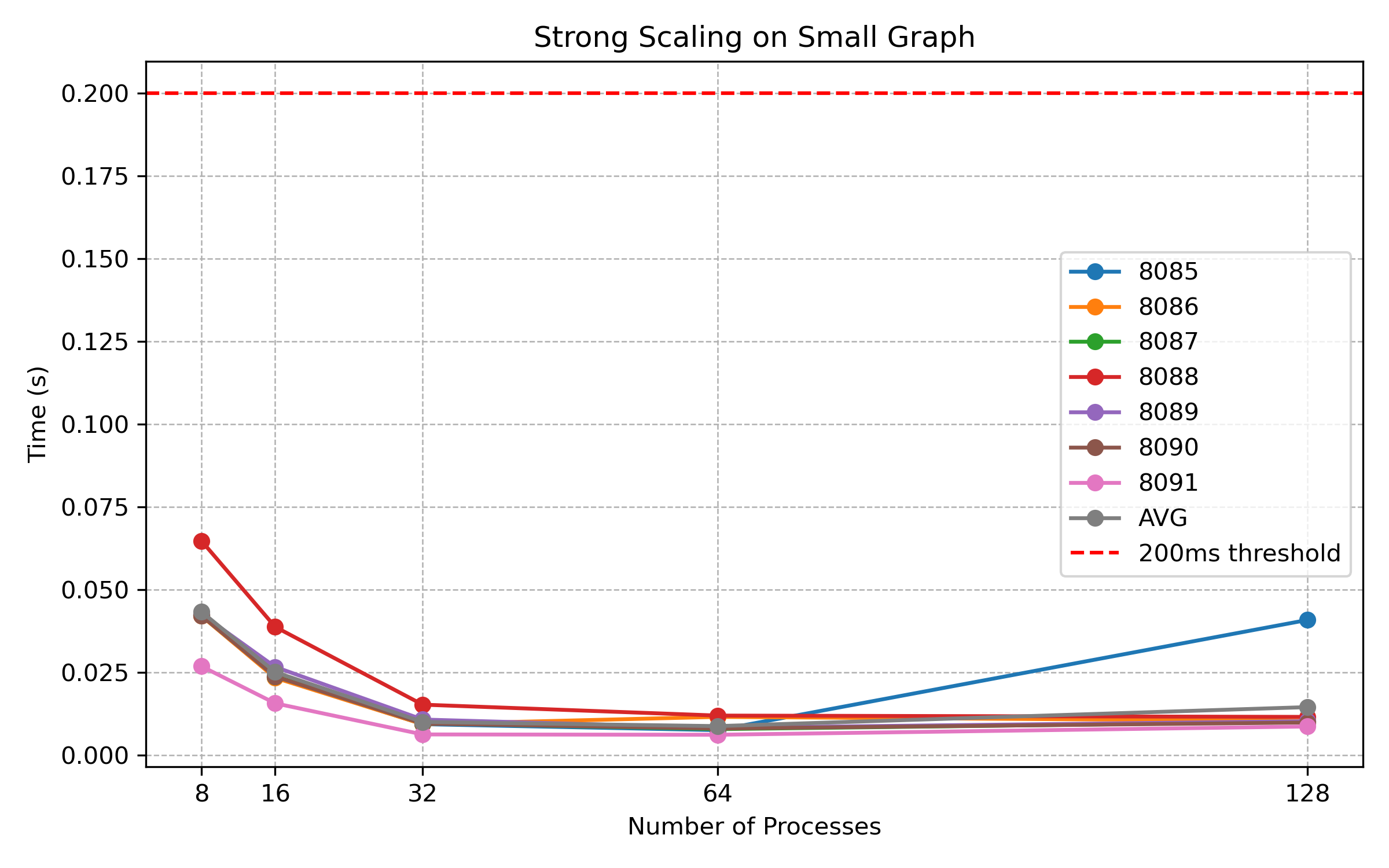

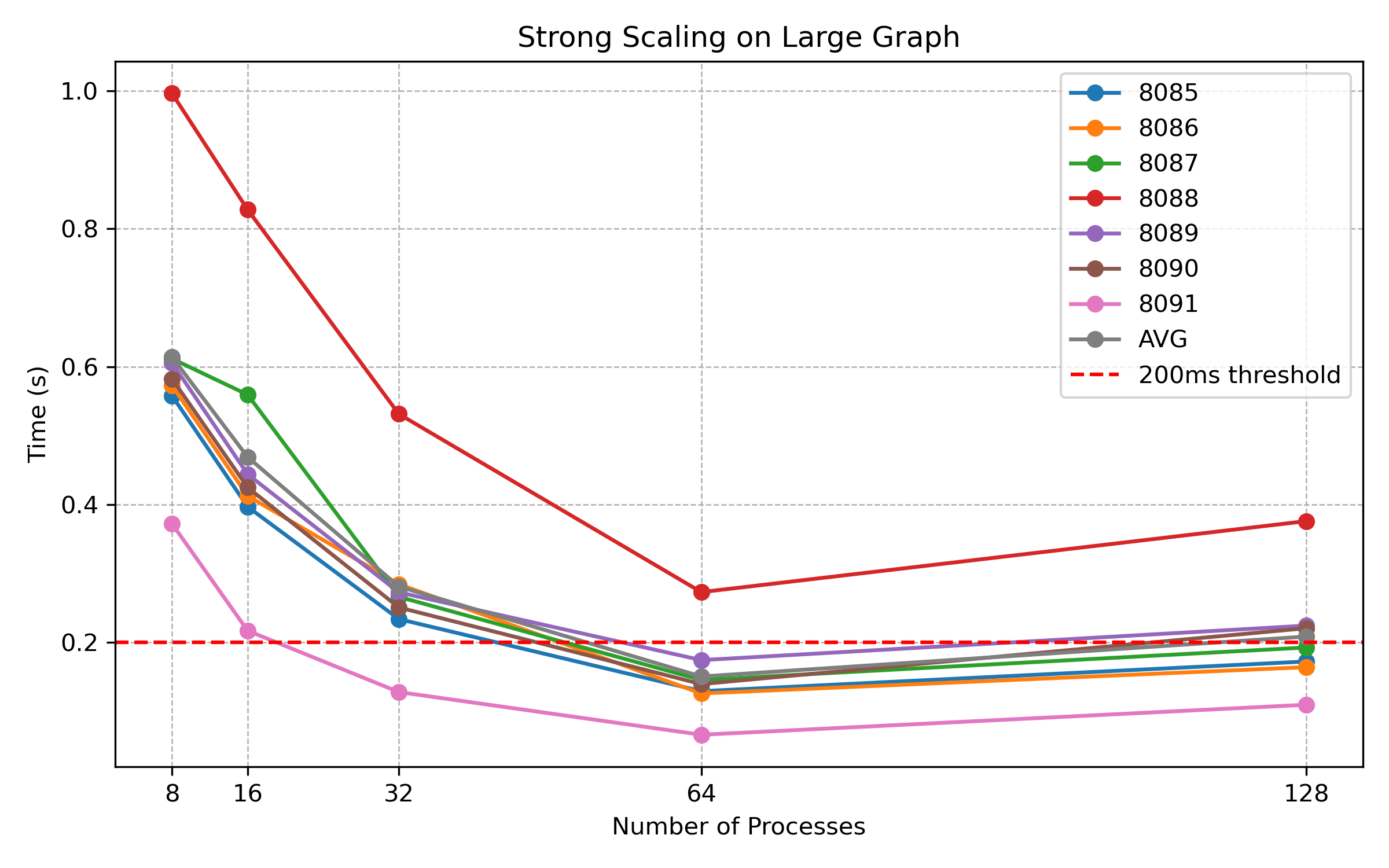

- Strong scaling experiments across fixed graph sizes

- Weak scaling experiments from 32 to 128 cores

- Latency-focused evaluation instead of throughput-only metrics

- Simulation of multi-robot servicing on shared compute nodes

Key Findings

- Strong scaling peaks at 64 cores before diminishing returns dominate

- Weak scaling remains highly linear, favoring predictable response times

- 32-core configurations provide the most reliable real-time performance

- CPU sharing across robot clients causes severe and unpredictable slowdowns

These results show that maximizing parallelism is not always beneficial. Instead, carefully bounded resource allocation is essential for real-time guarantees.

Design Insight

For real-time robotics, predictability outweighs raw throughput. This study suggests that future data centers supporting robotic workloads should allocate dedicated compute resources per robot, rather than sharing CPUs across multiple clients.

Recommended Resource Allocation:

- 1 × 32-core CPU per robot for small graphs

- 2 × 32-core CPUs per robot for large graphs

This approach trades some efficiency for the consistency required by safety-critical robotic systems operating under hard real-time constraints.